The Living Postcard

Year

2026

Role

Tools

Google Gemini

Google Antigravity

Netlify

Project Type

Vibe-Coded Web App

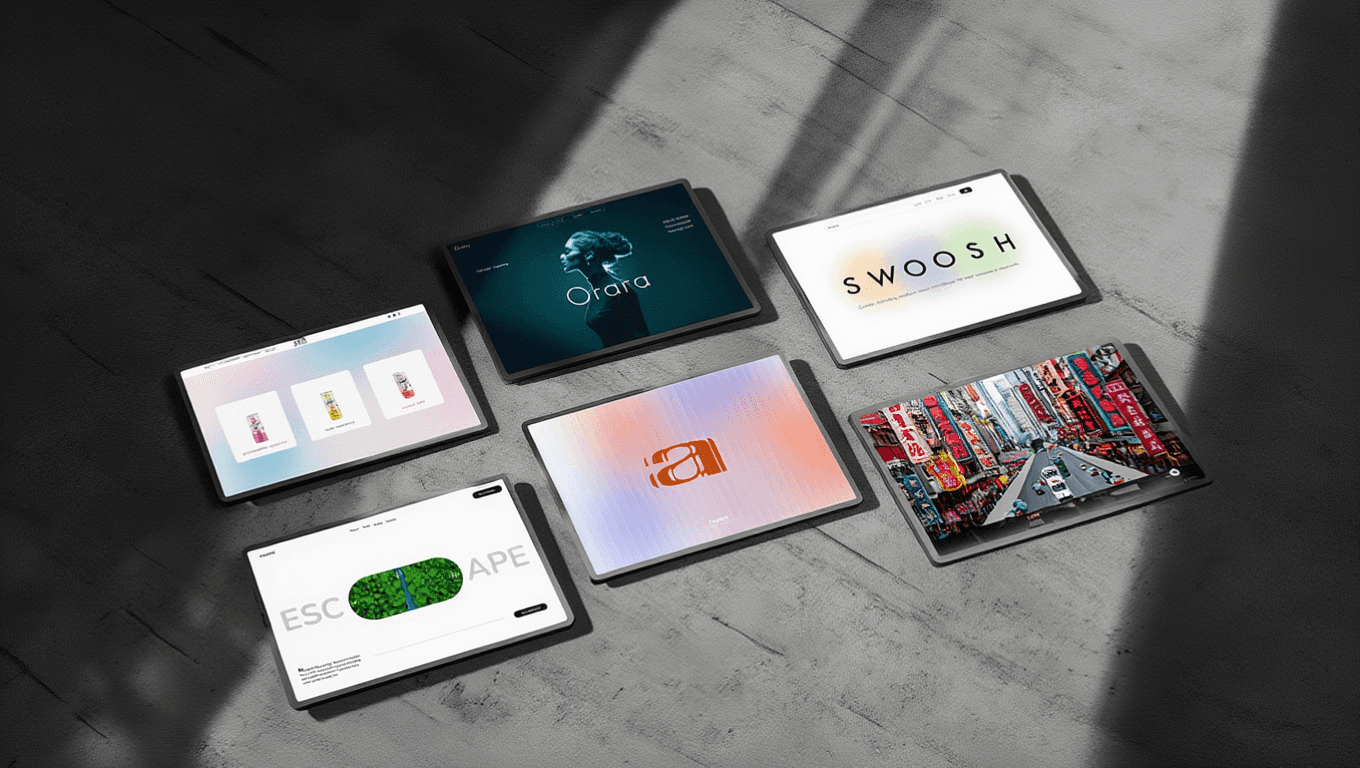

The Living Postcard is a digital-first gifting app built through vibe coding. It captures the creative process, whether through on-screen drawing or "air painting", and replays it for the recipient as a cinematic ghost-stroke animation that reveals the final painting alongside a personalized message and birth-month bouquet.

CONTEXT

Digital greetings often feel flat: they're quick to send and easy to ignore, missing the warmth and intention that make a physical card feel meaningful. The goal behind this project was to reframe the digital gift as a performance: something that captures the human effort, care, and artistry behind the gesture, not just the final image. At its heart, the project is personal, inspired by a desire to close the distance with family members abroad and to create moments of high-emotion connection that feel genuinely intimate, even across screens.

EXPERIENCE LOGIC

This project was built using a high-velocity Vibe Coding workflow. I acted as the Experience Architect, using Google Gemini and Antigravity to bridge the gap between complex computer vision and fluid UI motion.

Act I: The Creator’s Studio

The user is given a dual-mode canvas to craft their message:

Act II: The Recipient’s Performance

The recipient experiences a choreographed "unboxing" of the digital gift:

FEATURE SPOTLIGHT

The Ghost-Stroke Engine

I directed Google Gemini to structure custom playback logic that "records" the array of stroke coordinates as they are drawn and replays them with a temporal "ghost" effect.
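The record-and-replay idea can be sketched roughly as follows. This is a minimal illustration, not the app's actual code: the names `StrokePoint`, `StrokeRecorder`, and `ghostFrame`, the 600 ms trail, and the 0.25 starting opacity are all hypothetical. It also assumes that the "ghost" effect means freshly replayed points appear faint and settle to full opacity as they age, which is only one possible reading.

```typescript
// Hypothetical sketch: none of these names come from the original project.
interface StrokePoint {
  x: number;
  y: number;
  t: number; // milliseconds since the first recorded point
}

interface GhostPoint extends StrokePoint {
  alpha: number; // opacity in the range 0..1
}

class StrokeRecorder {
  private points: StrokePoint[] = [];
  private startedAt: number | null = null;

  // Call this from a pointer-move handler or a hand-tracking callback,
  // passing the current timestamp in milliseconds.
  record(x: number, y: number, now: number): void {
    if (this.startedAt === null) this.startedAt = now;
    this.points.push({ x, y, t: now - this.startedAt });
  }

  // Returns a copy so playback cannot mutate the recording.
  snapshot(): StrokePoint[] {
    return this.points.map((p) => ({ ...p }));
  }
}

// Computes what should be visible at a given playback time.
// Assumption: a point starts at `ghostAlpha` when it first appears and
// ramps linearly to full opacity over `trailMs` milliseconds.
function ghostFrame(
  points: StrokePoint[],
  playheadMs: number,
  trailMs: number = 600,
  ghostAlpha: number = 0.25
): GhostPoint[] {
  return points
    .filter((p) => p.t <= playheadMs)
    .map((p) => {
      const age = playheadMs - p.t;
      const progress = Math.min(age / trailMs, 1);
      return { ...p, alpha: ghostAlpha + (1 - ghostAlpha) * progress };
    });
}
```

In a browser, a render loop could call `ghostFrame` once per animation frame with the elapsed playback time and draw each returned point to a canvas using its `alpha`. Keeping the timing logic separate from the drawing code means the same recording can feed both on-screen drawing and "air painting" input.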

3D Spatial Transition

I used GSAP and Framer Motion to choreograph a transition that feels weighted and tactile, giving the digital card a sense of physical depth.

TECHNICAL STACK

REFLECTION

As a designer without a formal coding background, Vibe Coding allowed me to move directly from visualization to execution. By using AI to handle the complex syntax of hand-tracking and database logic, I was able to focus entirely on the "vibe": refining the easing curves and the cinematic timing of the reveal. For me, it shows that AI-native workflows allow a single designer to build high-emotion, multimodal experiences in days, not weeks.